Deep Learning

From Hand-Crafted Rules to Data-Driven Intelligence

The Old Approach: Hand-Crafted Features + Rules

To appreciate what ResNet brings, it helps to understand how limited the old technology was.

A single banknote CIS image is approximately 1,500 × 7,500 pixels — 11.25 million pixels. The old system used multiple approaches for different tasks, each sampling only a small fraction of this data:

| Task | Method | Features |
|---|---|---|
| Denomination classification | SVM on ROI (region of interest) | Average values of rectangular areas in selected regions |
| Counterfeit detection (specific areas) | SVM | 19 × 6 = 114 features (average value per rectangle) |
| Counterfeit detection (full area) | SVM | 22 × 5 = 110 features (average value per rectangle) |
| Counterfeit detection (primary) | Rule-based programming | Hand-written threshold checks per currency |

Each SVM feature was the average value of a rectangular area on the banknote image — reducing millions of pixels to a few hundred numbers. ROI (region of interest) was applied only for denomination and currency detection, while counterfeit detection SVMs analyzed predefined areas across the note.

However, the primary method for counterfeit detection was rule-based programming — hand-written threshold checks and conditional logic crafted by engineers for each known counterfeit type. This is precisely why a new software release was required every time a new counterfeit appeared in the market: engineers had to analyze the new counterfeit, determine its characteristics, and write new rules to detect it.

The fundamental limitation: whether SVM or rule-based, the old approach sampled only a tiny fraction of the available image data and relied on human judgment to determine what to look for. The vast majority of pixel information was discarded before classification even began.
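The SVM features in the table above are rectangle means. As a rough illustration of that reduction, the Python sketch below averages an image over a grid of rectangles. The grid orientation (6 rows × 19 columns for the 114-feature layout), the function name, and the synthetic image are assumptions for illustration; the original system's rectangle positions are not given.

```python
def rect_average_features(image, rows, cols):
    """Split an image (list of pixel rows) into a rows x cols grid of
    rectangles and return each rectangle's mean value, row-major order."""
    height, width = len(image), len(image[0])
    features = []
    for r in range(rows):
        y0, y1 = r * height // rows, (r + 1) * height // rows
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            total = sum(image[y][x] for y in range(y0, y1) for x in range(x0, x1))
            features.append(total / ((y1 - y0) * (x1 - x0)))
    return features


if __name__ == "__main__":
    # Synthetic 60 x 190 "image": each pixel's value is its column index.
    image = [[x for x in range(190)] for _ in range(60)]
    feats = rect_average_features(image, rows=6, cols=19)
    print(len(image) * len(image[0]), "pixels ->", len(feats), "features")
```

Whatever the real layout, the classifier only ever sees the grid means; any detail inside a rectangle that does not shift its average is invisible to it.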

ResNet: How Deep Learning Sees a Banknote

ResNet (Residual Network) does not separate feature extraction from classification. It learns both simultaneously through multiple layers, each building on the previous one:

| Layer | What It Learns | Human Analogy |
|---|---|---|
| Early layers (1–5) | Low-level features: edges, lines, color gradients, texture patterns | Noticing "something looks off" about the paper texture |
| Middle layers (6–20) | Mid-level features: ink patterns, micro-printing structures, security thread boundaries | Recognizing the security thread's weave pattern doesn't match |
| Deep layers (21–50+) | High-level features: holistic representations of how all elements relate across the full image | An expert examiner's "gut feeling" integrating everything |
| Final layer | Classification: denomination, counterfeit, fitness, tape, all at once | The examiner's final verdict |

No human engineer designed these features. The network discovers them automatically by training on thousands of banknote images — building increasingly abstract representations from raw pixels to edges to patterns to holistic understanding.
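The "residual" in ResNet refers to skip connections: each block computes a correction F(x) and adds its input x back, so a block with nothing useful to add can simply pass x through. The sketch below shows one block on a plain vector. Dense layers stand in for the convolutions and batch normalization a real ResNet uses, and the weights are made up for illustration.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def matvec(w, v):
    """Multiply matrix w (list of rows) by vector v."""
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def residual_block(x, w1, w2):
    """y = ReLU(F(x) + x) with F(x) = W2 ReLU(W1 x)."""
    f = matvec(w2, relu(matvec(w1, x)))        # the learned correction F(x)
    return relu([fi + xi for fi, xi in zip(f, x)])  # skip connection adds x back


if __name__ == "__main__":
    x = [1.0, -2.0, 3.0]
    zero = [[0.0] * 3 for _ in range(3)]
    # With all-zero weights F(x) = 0, so the block reduces to ReLU(x).
    print(residual_block(x, zero, zero))  # [1.0, 0.0, 3.0]
```

Because the identity path is always available, adding blocks does not make it harder for the network to preserve what earlier layers already found, which is what lets ResNets train well at depths of 50 layers and more.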

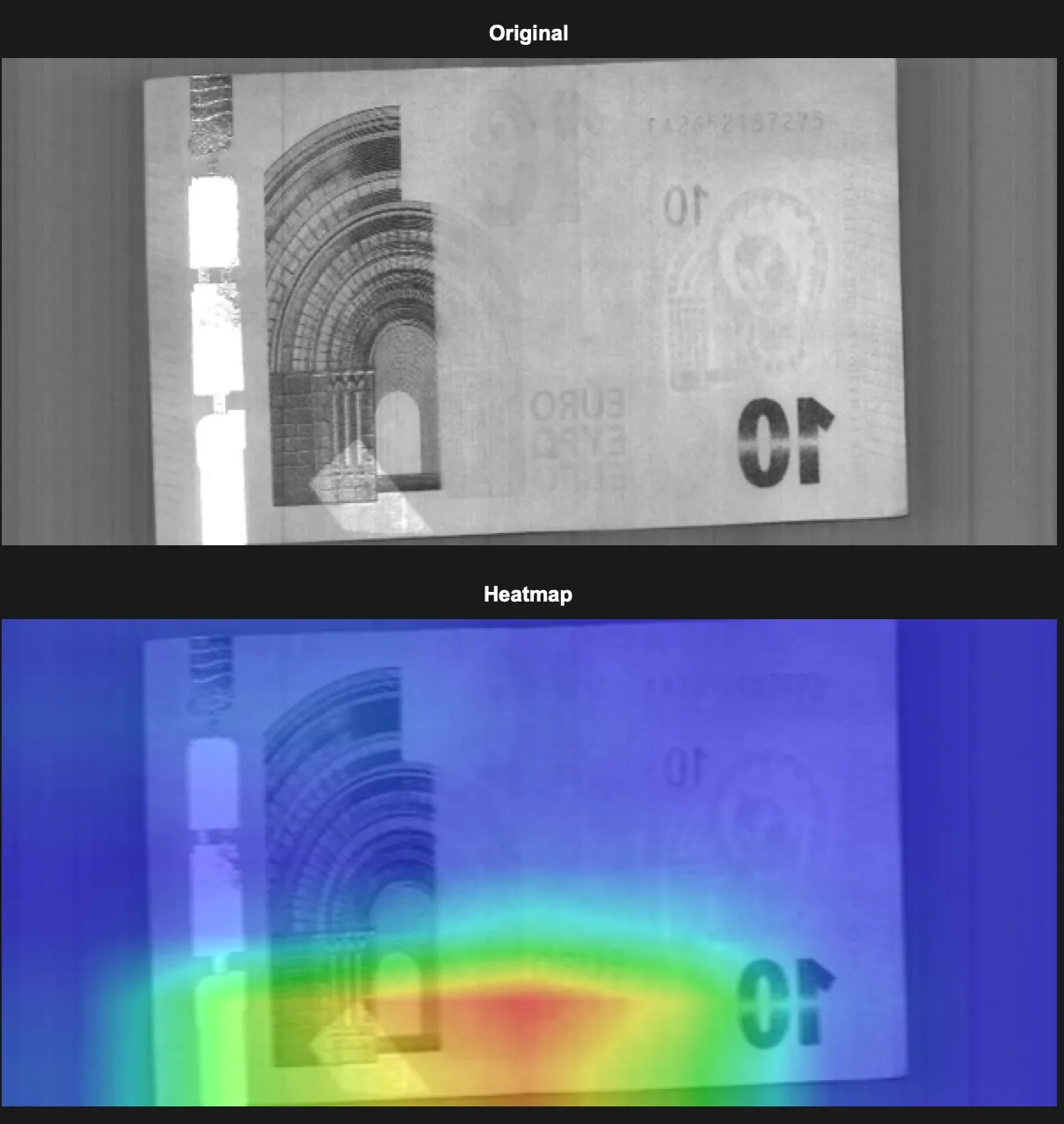

Seeing What the AI Sees: Attention Heatmap

Top: Original banknote image captured by CIS sensor. Bottom: AI attention heatmap — bright regions indicate where the neural network concentrates its analysis.

Notice what the AI is doing: it ignores the bright, reflective areas of the banknote (holographic patches, metallic ink) that would confuse a simple threshold-based system, and instead focuses precisely on the region where transparent scotch tape is attached. The network learned — entirely on its own — that these areas contain the anomaly worth detecting.

This is especially significant because transparent scotch tape is nearly invisible to the naked eye. Yet the combination of multi-spectral CIS imaging and ResNet's learned features makes tape clearly distinguishable from the note surface — even when it is small or placed at the very edge of the note.
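The article does not state how this heatmap was produced. One widely used technique for such maps is Grad-CAM: weight each feature map of the last convolutional layer by the average gradient of the class score (for example, "tape") with respect to that map, sum the weighted maps, and keep the positive part. The sketch below implements only that combination step on toy 2 × 2 maps; in practice the activations and gradients come from the trained network via backpropagation, and the coarse map is upsampled and overlaid on the CIS image.

```python
def grad_cam(activations, gradients):
    """Grad-CAM map from K feature maps and the class-score gradients w.r.t. them.

    activations, gradients: lists of K maps, each an H x W list of lists.
    Returns an H x W map scaled to [0, 1].
    """
    height, width = len(activations[0]), len(activations[0][0])
    # alpha_k: global average of the gradient over feature map k.
    alphas = [sum(map(sum, g)) / (height * width) for g in gradients]
    cam = [[0.0] * width for _ in range(height)]
    for alpha, fmap in zip(alphas, activations):
        for y in range(height):
            for x in range(width):
                cam[y][x] += alpha * fmap[y][x]
    # ReLU keeps only evidence for the class, then normalize for display.
    cam = [[max(0.0, v) for v in row] for row in cam]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam


if __name__ == "__main__":
    # Map 0 fires top-left, map 1 fires bottom-right.
    acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
    # The class score rises with map 0 and falls with map 1.
    grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
    print(grad_cam(acts, grads))  # [[1.0, 0.0], [0.0, 0.0]]
```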

With the old technology's 12 mechanical channels, detecting tape this small or this close to the edge was physically impossible. A 12-channel sensor provides roughly one measurement per centimeter, so a small piece of tape can fall entirely between two channels and go undetected. Worse, the leading edge (where tape is most commonly placed) was a blind zone due to mechanical oscillation. The AI + CIS approach delivers 1,560 pixels across the note with no blind zones: a 130× improvement in resolution (1,560 ÷ 12) covering the entire note surface, including all edges.
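The coverage argument can be checked with a simple one-dimensional model. The sketch assumes a 120 mm sensing span (which gives "roughly one measurement per centimeter" for 12 channels), treats each channel or pixel as a point at the center of its cell, and counts a tape strip as seen only if some sampling point lands on it. The span, the tape position, and the function names are hypothetical, and the model ignores the leading-edge blind zone.

```python
SPAN_MM = 120.0  # hypothetical sensing span: ~1 measurement per cm for 12 channels


def sample_positions(n, span_mm=SPAN_MM):
    """Centers of n evenly spaced sensing points across span_mm."""
    pitch = span_mm / n
    return [pitch * (i + 0.5) for i in range(n)]


def tape_is_sampled(positions, start_mm, width_mm):
    """True if at least one sensing point lies on the tape strip."""
    end_mm = start_mm + width_mm
    return any(start_mm <= p <= end_mm for p in positions)


if __name__ == "__main__":
    channels = sample_positions(12)   # 5, 15, 25, ... mm
    cis = sample_positions(1_560)     # ~0.077 mm pitch
    # A 4 mm strip starting at 16 mm sits between the channels at 15 and 25 mm.
    print(tape_is_sampled(channels, 16.0, 4.0))  # False
    print(tape_is_sampled(cis, 16.0, 4.0))       # True
    print(1_560 // 12)                           # 130
```

Under this model, any strip narrower than the 10 mm channel pitch can be placed so that it is missed, while at the CIS pitch the same 4 mm strip is covered by dozens of pixels.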

From Hundreds of Features to Millions of Learned Features

| | Old (Rule-based + SVM) | New (ResNet) |
|---|---|---|
| Input | ~224 hand-crafted features (multiple SVMs) + rule-based checks | 11.25M pixels × 5 modes = 56.25M values |
| Feature extraction | Manual, by human engineers | Automatic, learned from data |
| Features per layer | N/A (single-step) | Hundreds to thousands of feature maps |
| Learned parameters | ~hundreds | ~millions |
| Feature types | Average values of rectangular areas | Edges, textures, shapes, patterns, spectral correlations |
| What it can detect | Only what humans thought to look for | Any pattern present in the image that the training data teaches it to recognize |

How This Improves Banknote Processing

For an edge AI classifier processing 1,000+ banknotes per minute, this difference is transformative:

No blind spots

The old system — even with both counterfeit SVMs combined (114 + 110 features) — sampled only a tiny fraction of the image. With ResNet analyzing every pixel across 5 spectral modes, there is nowhere to hide.

Multi-spectral correlation

The old system processed each spectral mode independently. ResNet learns how the 5 spectral modes relate to each other at every pixel position — genuine ink has a specific IR-to-UV ratio that counterfeits rarely replicate.
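In a convolutional network, the five spectral modes can enter as five input channels of one tensor, so even a 1 × 1 convolution mixes the modes at every pixel with learned weights. The sketch below shows this on a toy 1 × 2 image; the mode order (IR at index 2, UV at index 4) and the intensities are hypothetical. It also illustrates a design point: on log-intensity inputs, the weights +1 for IR and −1 for UV produce log(IR/UV), so a learned mix can represent the hand-picked IR-to-UV ratio as a special case, alongside any other combination training favors.

```python
import math

IR, UV = 2, 4  # hypothetical indices of the IR and UV planes among the 5 modes


def stack_modes(planes):
    """5 images (H x W lists) -> H x W grid of 5-value per-pixel vectors."""
    height, width = len(planes[0]), len(planes[0][0])
    return [[[p[y][x] for p in planes] for x in range(width)] for y in range(height)]


def pointwise_mix(stacked, weights, bias=0.0):
    """One output channel of a 1x1 convolution: weighted sum of modes per pixel."""
    return [[sum(w * v for w, v in zip(weights, px)) + bias for px in row]
            for row in stacked]


if __name__ == "__main__":
    # Made-up 1 x 2 intensity images for the five modes.
    planes = [[[0.5, 0.5]], [[0.4, 0.4]], [[0.9, 0.3]], [[0.2, 0.2]], [[0.3, 0.3]]]
    log_planes = [[[math.log(v) for v in row] for row in p] for p in planes]
    weights = [0.0] * 5
    weights[IR], weights[UV] = 1.0, -1.0  # computes log(IR) - log(UV)
    mixed = pointwise_mix(stack_modes(log_planes), weights)
    print([round(math.exp(v), 3) for v in mixed[0]])  # IR/UV per pixel: [3.0, 1.0]
```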

Speed without compromise

Despite analyzing ~165,000× more data (56.25M values vs. ~342 hand-crafted features), the M1076 NPU, rated at 26 TOPS, completes classification within the same time budget. Same speed, incomparably better accuracy.
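Two numbers behind this claim can be checked directly: the average time available per note at 1,000 notes per minute, and the data ratio quoted in this paragraph. The function name is illustrative; the 342 figure is taken from the paragraph itself.

```python
def per_note_budget_ms(notes_per_minute):
    """Average processing time available per note at a given throughput."""
    return 60_000 / notes_per_minute


raw_values = 1_500 * 7_500 * 5  # 56.25M values per note across 5 spectral modes
old_features = 342              # hand-crafted feature count quoted above

print(per_note_budget_ms(1_000))         # 60.0
print(round(raw_values / old_features))  # 164474, i.e. ~165,000x
```

The 60 ms figure is an average throughput budget. A pipelined machine can take longer than that end to end for a single note, as long as each stage, including NPU inference, keeps pace with one note every 60 ms.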

Continuous improvement — by anyone

When a new counterfeit appears, the old system required a specialized engineer to analyze the counterfeit, write new rule-based detection logic, and release a software update — a process that took weeks or months. With ResNet, anyone who can correctly label banknote images can add new training samples and retrain the model in hours to days — the network discovers the distinguishing features automatically. No programming required.

Rule-Based Programming vs. Data-Driven Methodology

Rule-Based Programming

An engineer analyzes the problem, designs the logic, and writes explicit rules:

```
IF ...
AND ir_ratio(zone_B) > 0.8
THEN denomination = $100

IF thickness_variation(ch_5) > threshold
THEN tape_detected = true
```

Every decision is predetermined by the engineer. The machine does not learn — it executes.
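For comparison with the data-driven approach, the two rules above can be written as a runnable Python function. The measurement keys and the 0.15 tape threshold are hypothetical. The `earlier_conditions_met` flag is a placeholder for the opening IF clause of the first rule, which the text does not give, so this sketch does not reproduce that rule's full logic.

```python
# Hypothetical threshold: chosen by an engineer, not learned from data.
TAPE_THRESHOLD = 0.15


def classify_rule_based(measurements):
    """Apply hand-written rules to precomputed measurements (a dict)."""
    result = {"denomination": None, "tape_detected": False}
    # "earlier_conditions_met" stands in for the first rule's missing IF clause.
    if measurements["earlier_conditions_met"] and measurements["ir_ratio_zone_b"] > 0.8:
        result["denomination"] = "$100"
    if measurements["thickness_variation_ch5"] > TAPE_THRESHOLD:
        result["tape_detected"] = True
    return result


if __name__ == "__main__":
    note = {"earlier_conditions_met": True, "ir_ratio_zone_b": 0.86,
            "thickness_variation_ch5": 0.149}
    # Tape that raises channel 5 variation just below the threshold is missed.
    print(classify_rule_based(note))  # {'denomination': '$100', 'tape_detected': False}
```

Every number in the function is a human decision, and a note whose measurements land just on the wrong side of one, as in the example, stays misclassified until an engineer revises the rule.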

Data-Driven (Deep Learning)

Anyone who can properly label banknote images provides thousands of labeled examples, and the neural network discovers the rules by itself:

```
Banknote images
with labels (genuine/counterfeit,
denomination, fitness, tape)
        ↓
Neural network learns optimal features
        ↓
Classifies new banknotes automatically
```

No one writes classification rules. The data defines the logic. The only expertise needed is correctly labeling the banknotes — something any trained operator can do.
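The smallest possible illustration of "the data defines the logic" is to learn a rule's threshold from labeled samples instead of choosing it by hand. The Python sketch below fits a one-feature decision stump. It is far simpler than ResNet, which also learns the features themselves, and the feature name and sample values are hypothetical.

```python
def learn_threshold(samples):
    """Fit the rule 'positive if value > t' to labeled (value, is_positive) pairs.

    Tries a threshold below all values, every midpoint between neighbouring
    values, and one above all values; returns the t with the fewest errors.
    """
    values = sorted({v for v, _ in samples})
    candidates = ([values[0] - 1.0]
                  + [(a + b) / 2 for a, b in zip(values, values[1:])]
                  + [values[-1] + 1.0])

    def errors(t):
        return sum((v > t) != label for v, label in samples)

    return min(candidates, key=errors)


if __name__ == "__main__":
    # Hypothetical labeled measurements: (reflectance change, operator says "taped").
    labeled = [(0.02, False), (0.05, False), (0.08, False), (0.21, True), (0.34, True)]
    t = learn_threshold(labeled)
    print(round(t, 3))  # 0.145, halfway between 0.08 and 0.21
```

Adding newly labeled samples and calling the function again moves the threshold with no code changes, which is the property the data-driven workflow relies on.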

Comparison

| | Rule-Based | Data-Driven (Deep Learning) |
|---|---|---|
| Development | Manually analyze banknotes, identify features, write rules | Collect labeled data, train neural network |
| Expertise required | Deep domain knowledge + programming | Accurate labeling; no programming needed |
| Interpretability | High: every decision traceable to a rule | Lower, but explainability techniques (Grad-CAM) can visualize decisions |
| Dev time per currency | Weeks to months | Hours to days |
| Hardware requirement | Minimal: runs on low-power CPU | Requires dedicated AI accelerator (NPU) |
| Accuracy ceiling | Limited by human knowledge and sensor sampling | Limited only by data quality and model capacity |
| Edge cases | Each requires a new hand-written rule | Handled naturally if included in training data |
| Adaptability | Every change requires manual re-engineering | Retrain with new data → deploy |
| Scalability | Each new currency multiplies engineering effort | Each new task adds training data, not engineering effort |
| Failure mode | Predictable but brittle: fails on anything not anticipated | Graceful degradation: accuracy reduces gradually |

Why Data-Driven Is Superior for Banknote Processing

For general-purpose computing, rule-based programming works well. But banknote processing is fundamentally an image recognition problem — and this is where rule-based approaches break down:

1. The Problem Space Is Too Large for Human Rules

A single banknote image from the 5-mode CIS contains ~56 million data points. No engineer can write rules that account for the relationships among all of them. In practice, the old system reduced each note to a few hundred numbers (the 224 features of its two counterfeit SVMs are about 0.0004% of the available values) and applied rules to those, discarding the remaining 99.9996% of the available information.

A neural network processes all 56 million data points and discovers patterns that no human would think to look for.
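The percentages in point 1 follow from figures stated earlier in this document: the 1,500 × 7,500-pixel image, the 5 spectral modes, and the 114 + 110 features of the two counterfeit SVMs. A quick check:

```python
pixels_per_image = 1_500 * 7_500        # 11.25M pixels per CIS image
values_per_note = pixels_per_image * 5  # 56.25M values across 5 spectral modes
svm_features = 114 + 110                # the two counterfeit-detection SVMs

used = svm_features / values_per_note
print(f"used: {used * 100:.4f}%  discarded: {(1 - used) * 100:.4f}%")
# used: 0.0004%  discarded: 99.9996%
```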

2. Banknotes Are Not Static — They Evolve

- New currencies are issued regularly (new designs, new security features, new denominations)

- New counterfeits appear constantly — often designed specifically to pass existing detection rules

- Fitness criteria change as central banks update their standards

- Regional variations exist — printing quality, paper condition, and wear patterns differ by country

With rule-based programming, every change requires a skilled engineer who can both analyze banknotes and write code. With data-driven methodology, anyone who can properly label banknotes — a trained operator, a dealer, a central bank technician — can collect new samples → label them → retrain → deploy. No engineering background needed.

3. The Accuracy Gap Is Insurmountable

Consider tape detection: the best rule-based result in the CBRF test is 64.58% — the highest in the industry after decades of engineering. The data-driven target is near 100%.

This ceiling reflects a fundamental limitation of indirect sensing combined with rule-based logic. No amount of additional rules can overcome the physical constraints of a 12-channel mechanical sensor.

4. Rule-Based Advantages Are Disappearing

- Low hardware cost: no longer decisive, because the Zynq 1EG + M1076 combination is now affordable for commercial equipment

- Interpretability: modern explainability techniques (Grad-CAM) can visualize what the network focuses on, and with the Mali GPU + external display, operators can see which regions of a note drove its rejection

- Predictability: thorough validation on test datasets provides statistical confidence
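Statistical confidence from validation can be made concrete with a confidence interval on measured accuracy. The sketch below uses the Wilson score interval, a standard choice for proportions close to 100%; the function name and the example counts are illustrative, not test results.

```python
import math


def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a measured accuracy (z = 1.96 gives ~95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


if __name__ == "__main__":
    for correct, total in [(995, 1_000), (1_000, 1_000), (10_000, 10_000)]:
        low, high = wilson_interval(correct, total)
        print(f"{correct}/{total}: {low:.4f} .. {high:.4f}")
```

A perfect 1,000/1,000 result supports a lower bound of only about 99.6%, while 10,000/10,000 raises it to about 99.96%, so a "near 100%" target needs a correspondingly large labeled test set.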

5. A Real-World Analogy

Rule-based approach: Hand a new employee a checklist — "check watermark at position X, check security thread at position Y." They will catch counterfeits that fail these specific checks, but miss any counterfeit designed to pass the checklist.

Data-driven approach: Show them 50,000 genuine and counterfeit notes. After sufficient training, they develop a "feel" for authenticity — noticing subtle anomalies that no checklist could capture. Our NPU-based system is the machine equivalent of this trained intuition. And critically — the person who prepares the training notes doesn't need to be an engineer. They just need to correctly sort them into the right categories.

Beyond Tape Detection: Transforming Every Aspect

Tape detection is the first and most dramatic application. But the same full-image, deep learning approach fundamentally improves every function of the banknote counting machine.

| Function | Old (Rule-based + SVM) | New (CIS + NPU + ResNet) |
|---|---|---|
| Counterfeit detection | Rule-based thresholds + two SVMs (specific area + full area) | Analyze entire surface under 5 spectral modes |
| Composite note | Serial number comparison only | Direct visual detection of cut lines |
| Tape detection | 64.58% (CBRF best) | Target: near 100% |
| Fitness sorting | Binary (fit/unfit), coarse | Granular, defect-specific classification |
| Serial number OCR | Fixed position, fragile | Position-invariant, robust |
| New counterfeit response | New rules must be written per counterfeit type | Retrain with new samples and deploy |
| Orientation sorting | Basic feature matching | Full visual context, robust to damage |
| Security verification | Independent threshold checks | Holistic multi-spectral analysis |

Adaptive Learning — Getting Smarter Over Time, By Anyone

Perhaps the most transformative advantage: the NPU-based system improves continuously through model updates — without any hardware changes and without requiring software engineers.

The key insight: the only human expertise required is accurate labeling. If you can correctly identify a banknote as genuine or counterfeit, fit or unfit, taped or clean — you can build the training dataset. The neural network handles the rest. This means dealers, operators, or central bank staff can contribute directly to model improvement — and the entire cycle from labeling to a deployable model takes hours to days, not weeks or months.

We are planning to build a self-service model development system — a platform where dealers and qualified domain experts can create and train their own models directly, without any programming knowledge. Anyone with the right domain expertise to label banknotes accurately will be able to develop, validate, and deploy custom models tailored to their specific market and currency requirements.

- New type of counterfeit appears in the market → label new samples → retrain → deploy via remote upgrade

- Central bank changes fitness criteria → re-label accordingly → retrain → deploy

- New currency version released → collect and label new notes → fine-tune → deploy
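Each of these update paths follows the same label, retrain, deploy cycle. The sketch below makes one safeguard explicit: the candidate model is deployed only if it scores at least as well as the current model on the same labeled test set. The `train` and `evaluate` callables, the toy "model," and all names are hypothetical placeholders, not the actual training pipeline.

```python
from typing import Callable, Sequence, Tuple, TypeVar

Model = TypeVar("Model")


def update_cycle(
    deployed: Model,
    training_data: Sequence,
    new_samples: Sequence,
    train: Callable[[list], Model],
    evaluate: Callable[[Model, Sequence], float],
    test_set: Sequence,
) -> Tuple[Model, float, bool]:
    """Retrain on old + newly labeled data, then deploy only if not worse.

    Returns (model_to_run, its_test_score, whether_a_new_model_was_deployed).
    """
    candidate = train(list(training_data) + list(new_samples))
    old_score = evaluate(deployed, test_set)   # same test set for both models
    new_score = evaluate(candidate, test_set)
    if new_score >= old_score:
        return candidate, new_score, True      # e.g. push via remote upgrade
    return deployed, old_score, False          # keep the current model


if __name__ == "__main__":
    # Toy stand-ins: a "model" is just the set of labels it was trained on.
    def toy_train(data):
        return {label for _, label in data}

    def toy_evaluate(model, test):
        return sum(label in model for _, label in test) / len(test)

    old_data = [("note_a", "genuine"), ("note_b", "counterfeit_v1")]
    new_data = [("note_c", "counterfeit_v2")]
    test = [("t1", "genuine"), ("t2", "counterfeit_v1"), ("t3", "counterfeit_v2")]
    model, score, shipped = update_cycle(toy_train(old_data), old_data, new_data,
                                         toy_train, toy_evaluate, test)
    print(shipped, score)  # True 1.0
```

The test set should include labeled examples of the new counterfeit or currency version; otherwise the comparison cannot show whether the update helped with the case that triggered it.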

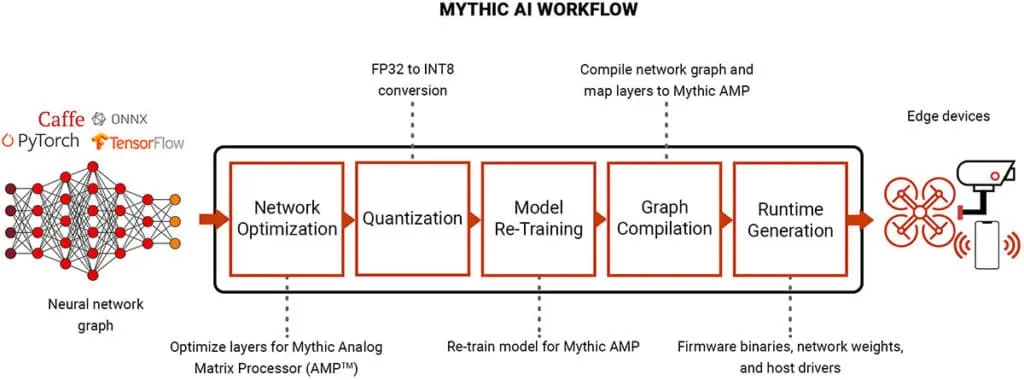

The Mythic AI workflow: from standard deep learning frameworks to optimized edge deployment. Models trained in PyTorch or TensorFlow are quantized and compiled for the M1076 NPU, then deployed to edge devices — enabling continuous model updates without hardware changes.
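Quantization maps a trained model's floating-point weights onto small integers for the accelerator to compute with. The Mythic toolchain's actual scheme is not described here; the sketch below shows generic symmetric per-tensor quantization, with the 8-bit width and the function names as assumptions.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: each w is approximated by scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the signed 8-bit range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    return [scale * v for v in q]


if __name__ == "__main__":
    w = [0.42, -1.3, 0.07, 0.0, 0.9]
    q, scale = quantize_int8(w)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(w, restored))
    print(q, f"scale={scale:.5f}", f"max error={worst:.5f}")
```

The rounding error per weight is at most half the scale, which is why accuracy should be re-checked on the validation set after quantization, not only after training.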