Analog NPU

Mythic M1076 — Bringing GPU Power to Edge Devices

Mythic M1076 at a Glance

- 26 TOPS AI performance (INT8)

- 3–4W power consumption

- 19 × 15.5 mm BGA package (~3 cm²)

- 76 AMP tiles, up to 80M weight parameters

- No external DRAM — weights stored on-chip in flash

- PCIe Gen2 x4 interface
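The headline figures above lend themselves to a quick sanity check. The Python sketch below turns the 26 TOPS peak into an upper bound on inferences per second and checks whether a model's weight count fits in the 80M on-chip capacity. It assumes the vendor counts one multiply-accumulate as two operations (the usual TOPS convention). The model size, MAC count and 50% utilization in the example are hypothetical placeholders, not measurements.

```python
"""Back-of-envelope capacity checks for an M1076-class accelerator."""

PEAK_TOPS = 26            # INT8 tera-operations per second (spec list above)
MAX_WEIGHTS = 80_000_000  # on-chip weight capacity (spec list above)
OPS_PER_MAC = 2           # assumption: 1 multiply + 1 add, the usual TOPS convention


def max_inferences_per_second(macs_per_inference: float, utilization: float = 1.0) -> float:
    """Upper bound on inferences per second at a given average array utilization."""
    if macs_per_inference <= 0:
        raise ValueError("macs_per_inference must be positive")
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    ops_per_inference = macs_per_inference * OPS_PER_MAC
    return PEAK_TOPS * 1e12 * utilization / ops_per_inference


def fits_on_chip(num_weights: int) -> bool:
    """True if every weight of the model can stay resident in on-chip flash."""
    return num_weights <= MAX_WEIGHTS


if __name__ == "__main__":
    # Hypothetical model: 2 GMACs per image, 25M weights, 50% assumed utilization.
    ceiling = max_inferences_per_second(2e9, utilization=0.5)
    print(f"throughput ceiling: {ceiling:.0f} inferences/s")
    print("fits on chip:", fits_on_chip(25_000_000))
```

Real throughput sits below this ceiling, because layers rarely map perfectly onto the tiles. The bound is still useful for ruling a model in or out early.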

The Problem: AI Needs a GPU, But GPUs Don't Fit

Deep learning requires massive parallel computation — the kind of work that GPUs excel at. But conventional GPUs are designed for data centers or PCs:

- Too expensive to embed in a banknote counting machine

- Too power-hungry — a typical AI-capable GPU draws 75–300 W

- Too large — requires active cooling and substantial board space

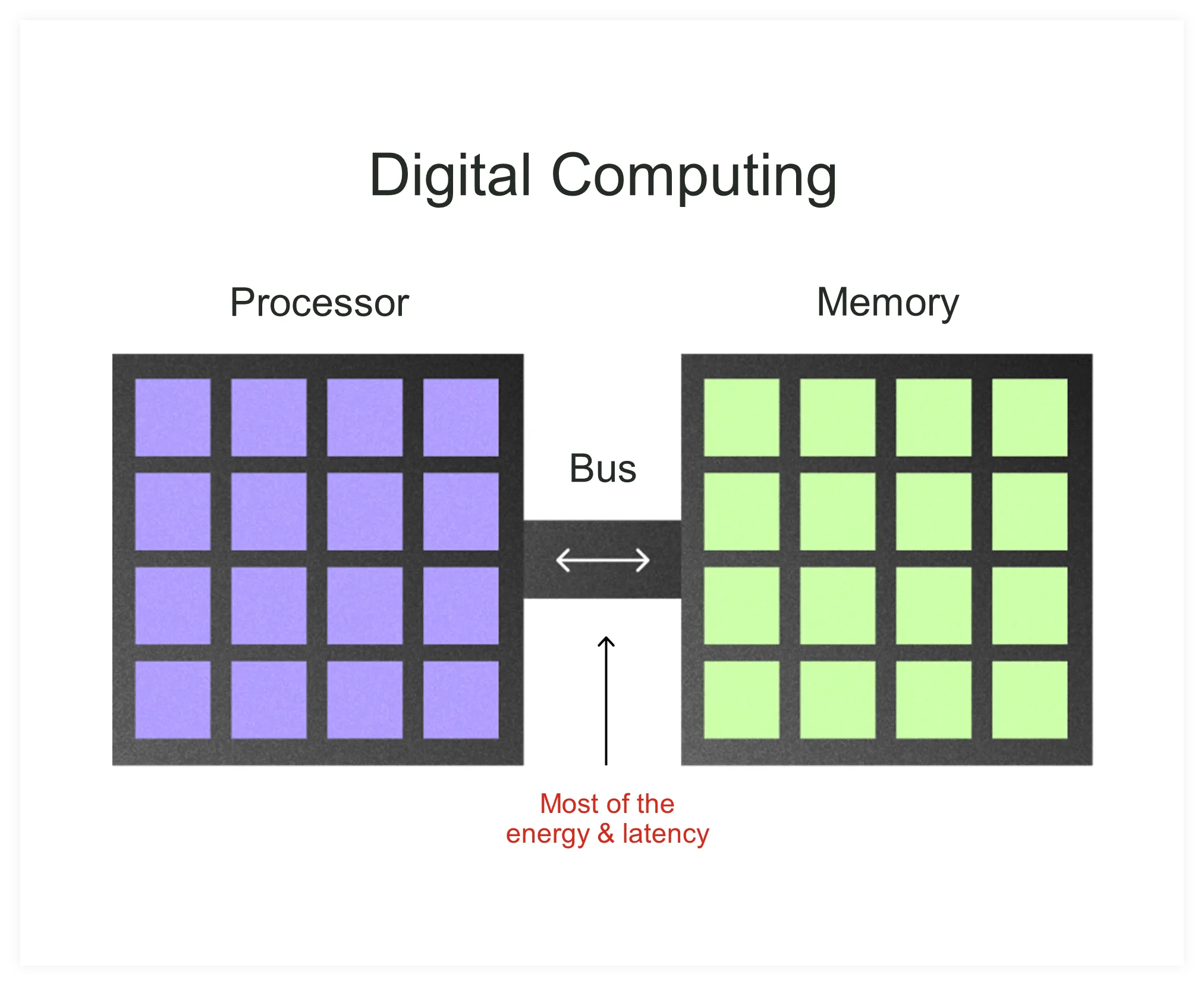

Digital AI accelerators for edge devices do exist, but they face a fundamental bottleneck: memory bandwidth. Neural network inference requires billions of multiply-accumulate operations, and in a traditional digital architecture, model weights must be constantly shuttled between external memory (DRAM) and the compute unit. This data movement consumes most of the power and limits throughput.
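To put the bandwidth problem in numbers, suppose a digital accelerator had to re-read every weight from DRAM for each inference. Weight traffic alone would then scale as weights × bytes per weight × inferences per second. The minimal Python sketch below computes that worst case. It ignores on-chip caching and batching, which real digital NPUs use to reduce the figure, and the inference rate in the example is hypothetical.

```python
def weight_traffic_gb_per_s(num_weights: int, bytes_per_weight: float,
                            inferences_per_s: float) -> float:
    """GB/s (1 GB = 1e9 bytes) of weight reads if every weight is fetched
    from external DRAM once per inference.

    Worst-case simplification: caching and batching lower the real figure.
    """
    return num_weights * bytes_per_weight * inferences_per_s / 1e9


if __name__ == "__main__":
    # Hypothetical: a model filling an 80M-weight capacity, INT8, 30 images/s.
    print(f"{weight_traffic_gb_per_s(80_000_000, 1, 30):.1f} GB/s of weight reads")
```

For 80M INT8 weights (1 byte each) at 30 inferences per second, this gives 2.4 GB/s of weight reads before any activations move. An analog compute-in-memory chip avoids this traffic because its weights never leave the array.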

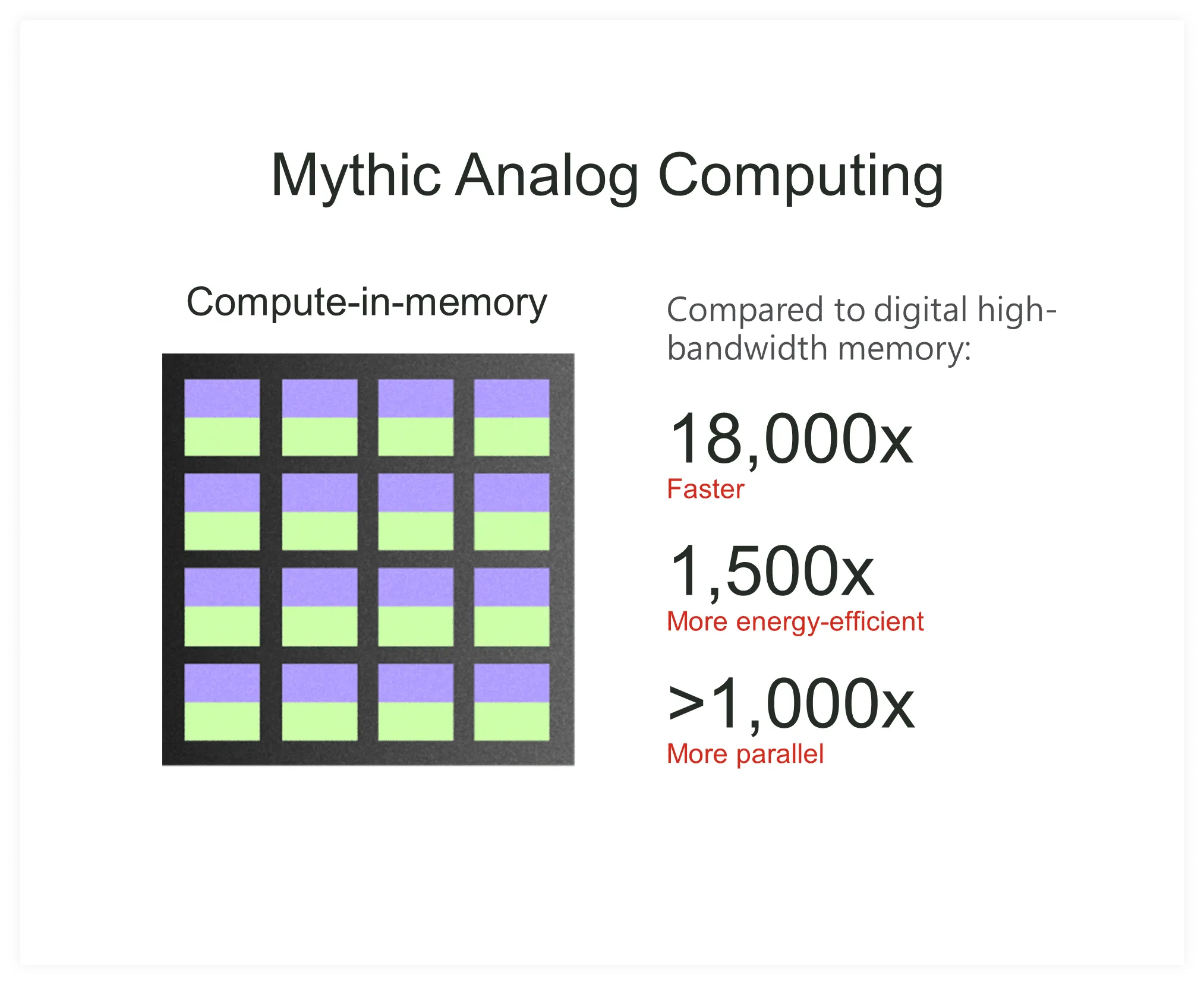

The Solution: Analog Compute-in-Memory

Mythic's M1076 Analog Matrix Processor takes a fundamentally different approach: it stores neural network weights directly in flash memory cells and computes using analog signals. Instead of moving data to the processor, computation happens where the data already resides.

Traditional: data moves between processor and memory

Mythic: computation happens where data resides

How it works:

- Model weights are stored as analog values in flash memory cells (76 AMP tiles, up to 80M parameters)

- Input signals are converted to analog voltages

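- Matrix multiplication happens through the physics of the circuit itself. Ohm's law (I = V × G, where a cell's conductance G encodes its weight) performs each multiplication, and Kirchhoff's current law sums the resulting currents on a shared wire to perform the accumulation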

- Results are converted back to digital for the next layer

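Because the weights never leave the memory array, nothing has to be fetched from external DRAM during inference. This removes the main source of the memory bottleneck.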
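The steps above can be illustrated with an idealized model of an analog crossbar. This Python sketch is a textbook simplification, not Mythic's actual circuit. It assumes:

- Weights lie in [-1, 1] and are encoded as a pair of non-negative conductances (G+, G-).
- Inputs are non-negative values in [0, 1] (e.g. after ReLU), applied as voltages by a DAC.
- Each output line has an ideal ADC.

The constants V_MAX and G_MAX are hypothetical values chosen only to make the units concrete.

```python
from typing import List

V_MAX = 0.5   # hypothetical DAC full-scale voltage, volts
G_MAX = 1e-6  # hypothetical maximum cell conductance, siemens


def analog_matvec(weights: List[List[float]], x: List[float], adc_bits: int = 8) -> List[float]:
    """Idealized analog crossbar computing y[j] ~= sum_i weights[j][i] * x[i].

    Assumes weights in [-1, 1] and non-negative inputs in [0, 1].
    """
    n = len(x)
    volts = [xi * V_MAX for xi in x]          # DAC: digital activation -> voltage
    full_scale = n * V_MAX * G_MAX            # largest possible |line current|
    levels = 2 ** (adc_bits - 1) - 1          # positive codes of a signed ADC
    y = []
    for row in weights:
        if len(row) != n:
            raise ValueError("weight row length must match input length")
        # Signed weight -> pair of non-negative conductances (G+, G-).
        g_pos = [max(w, 0.0) * G_MAX for w in row]
        g_neg = [max(-w, 0.0) * G_MAX for w in row]
        # Ohm's law gives each cell current I = V * G; Kirchhoff's current law
        # sums the cell currents on each line. v * (gp - gn) equals the G+ line
        # current minus the G- line current.
        current = sum(v * (gp - gn) for v, gp, gn in zip(volts, g_pos, g_neg))
        code = round(current / full_scale * levels)  # ADC: current -> integer code
        y.append(code / levels * n)                  # rescale to weight*input units
    return y


def digital_matvec(weights: List[List[float]], x: List[float]) -> List[float]:
    """Exact reference result for comparison."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


if __name__ == "__main__":
    W = [[0.5, -0.25], [1.0, 1.0]]
    x = [1.0, 0.5]
    print("analog :", analog_matvec(W, x))
    print("digital:", digital_matvec(W, x))
```

The ADC quantization step is n / 127 for n inputs with an 8-bit ADC, so the analog result stays within about half a step of the exact dot product. Raising adc_bits shrinks that error at the cost of a more expensive converter, which is one of the precision-versus-cost knobs in analog designs.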

Analog Trade-off: Noise

The inherent challenge of analog computing is noise. Analog signals are subject to thermal noise, process variation, and other imprecisions that digital circuits avoid by resolving every signal to a discrete 0 or 1. This is a major reason the industry moved away from analog computing decades ago.

However, neural networks are inherently noise-tolerant. Unlike traditional algorithms where a single bit error causes failure, neural networks are designed to generalize from noisy data. Mythic exploits this property: the small inaccuracies introduced by analog computation fall within the tolerance of the neural network, so the final classification result remains accurate.
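The noise-tolerance argument can be made concrete with a toy linear classifier. In the Python sketch below, every weight is perturbed by bounded uniform noise of at most eps on each inference, and the predicted class is compared with the noise-free prediction. This is a simplified stand-in, not a model of Mythic's actual noise.

Each logit can move by at most eps × sum(|x_i|). Any input whose top-two logit margin exceeds twice that bound is therefore guaranteed to keep its class. The function counts violations of that guarantee, which should always be zero.

Real thermal noise is Gaussian rather than bounded, so the guarantee is an idealization. The class count, dimensions and eps are arbitrary assumptions.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]


def logits(W: Matrix, x: List[float]) -> List[float]:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def argmax(v: List[float]) -> int:
    return max(range(len(v)), key=v.__getitem__)


def top2_margin(z: List[float]) -> float:
    first, second = sorted(z, reverse=True)[:2]
    return first - second


def noise_experiment(num_classes: int = 5, dim: int = 64, samples: int = 1000,
                     eps: float = 0.02, seed: int = 0) -> Tuple[float, int]:
    """Return (argmax agreement rate, violations of the worst-case bound)."""
    rng = random.Random(seed)
    W = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_classes)]
    agree = 0
    violations = 0
    for _ in range(samples):
        x = [rng.random() for _ in range(dim)]
        clean = logits(W, x)
        # Fresh bounded noise on every weight, as if each inference were perturbed.
        W_noisy = [[w + rng.uniform(-eps, eps) for w in row] for row in W]
        same = argmax(clean) == argmax(logits(W_noisy, x))
        agree += same
        # Each logit moves by at most eps * sum|x_i|, so a margin above twice
        # that amount cannot be overturned.
        if top2_margin(clean) > 2 * eps * sum(abs(xi) for xi in x) and not same:
            violations += 1
    return agree / samples, violations


if __name__ == "__main__":
    rate, violations = noise_experiment()
    print(f"argmax agreement under noise: {rate:.1%}, bound violations: {violations}")
```

In this toy setting, only inputs that already sit close to a decision boundary can change class. Inputs with a comfortable margin produce the same answer whether the arithmetic is exact or noisy.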

Performance Comparison

To understand where the M1076 fits, consider a comparison with the NVIDIA Jetson Xavier NX — a purpose-built edge AI module designed for embedded applications:

| | NVIDIA Jetson Xavier NX | Mythic M1076 |
|---|---|---|
| AI performance (INT8) | 21 TOPS | 26 TOPS |
| Power consumption | 10–15 W | 3–4 W |
| TOPS/W | 1.4–2.1 | 6.5–8.7 |
| Memory | 8 GB LPDDR4x (shared CPU/GPU, off-chip) | On-chip flash (no external DRAM) |
| Price | ~$400 (module only) | Comparable to digital NPU |
| Module size | 69.6 × 45 mm (SO-DIMM) | 19 × 15.5 mm (BGA) |
| Board area | ~31 cm² (+ carrier board required) | ~3 cm² (mounts directly on PCB) |
| Cooling | Heatsink + fan recommended | No fan needed |
| Additional HW needed | Carrier board, storage, PSU | None beyond the existing PCIe host |
| Suitable for banknote machine | Difficult | Yes |
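The derived rows of the comparison table (TOPS per watt and board area) follow directly from the raw figures. The short Python script below recomputes them so the arithmetic can be checked.

- Efficiency is given as a range, using the upper and lower ends of each power figure.
- Board area counts only the module or package footprint, so the Jetson's carrier board is excluded.

```python
from typing import Dict, Tuple

# Raw figures copied from the comparison table.
SPECS: Dict[str, dict] = {
    "NVIDIA Jetson Xavier NX": {"tops": 21, "watts": (10, 15), "size_mm": (69.6, 45.0)},
    "Mythic M1076": {"tops": 26, "watts": (3, 4), "size_mm": (19.0, 15.5)},
}


def tops_per_watt(tops: float, watts: Tuple[float, float]) -> Tuple[float, float]:
    """(worst, best) efficiency: peak TOPS over the highest and lowest power figure."""
    low_w, high_w = watts
    return tops / high_w, tops / low_w


def area_cm2(size_mm: Tuple[float, float]) -> float:
    width, height = size_mm
    return width * height / 100.0  # 100 mm^2 per cm^2


if __name__ == "__main__":
    for name, s in SPECS.items():
        worst, best = tops_per_watt(s["tops"], s["watts"])
        print(f"{name}: {worst:.1f}-{best:.1f} TOPS/W, {area_cm2(s['size_mm']):.1f} cm^2")
```

It prints 1.4-2.1 TOPS/W and 31.3 cm^2 for the Xavier NX module, and 6.5-8.7 TOPS/W and 2.9 cm^2 for the M1076.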

The M1076 is also available as an M.2 card (22 × 80 mm). It fits the same M.2 slots that laptops use for NVMe SSDs, which makes it easy to add to existing systems over PCIe.

Both deliver comparable raw AI performance (21 vs. 26 TOPS). But the decisive factors are price and size — the two things that determine whether AI can actually fit inside a banknote counting machine:

- Price — The Xavier NX module alone costs ~$400, and still requires a carrier board, external storage, and a dedicated power supply. The total system cost would exceed the selling price of many banknote counting machines. The M1076 costs a fraction of that.

- Size — The Xavier NX module measures 69.6 × 45 mm and needs a separate carrier board (~100 × 80 mm). The M1076 is just 19 × 15.5 mm and mounts directly on the existing PCB — no additional board required.

- System simplicity — The Xavier NX is essentially a small computer with its own ecosystem (OS, DRAM, storage). The M1076 is a single chip that connects via PCIe to the existing Zynq 1EG — it integrates seamlessly into the machine's existing architecture.
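Because the weights stay on the M1076, only input images and results need to cross the PCIe Gen2 x4 link to the Zynq 1EG. The Python sketch below estimates whether that link has headroom. PCIe Gen2 signals at 5 GT/s per lane with 8b/10b encoding, which gives 500 MB/s per lane per direction before protocol overhead. The 80% protocol efficiency and the frame size (two 1024 × 512 RGB images per note) are hypothetical assumptions, not figures from the machine.

```python
LANE_RATE_GT_S = 5.0       # PCIe Gen2 signaling rate per lane, gigatransfers/s
ENCODING_EFFICIENCY = 0.8  # 8b/10b line coding: 8 payload bits per 10 line bits
LANES = 4                  # x4 link, per the M1076 spec list


def link_bytes_per_s(lanes: int = LANES, protocol_efficiency: float = 1.0) -> float:
    """Usable bytes/s in one direction. protocol_efficiency < 1 is an assumed
    allowance for packet headers and flow control."""
    bits_per_s = LANE_RATE_GT_S * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 * protocol_efficiency


def max_frames_per_s(frame_bytes: int, protocol_efficiency: float = 1.0) -> float:
    """Frames per second the link can carry if only input frames cross it."""
    return link_bytes_per_s(protocol_efficiency=protocol_efficiency) / frame_bytes


if __name__ == "__main__":
    # Hypothetical frame: front + back images, 1024 x 512 pixels, 3 bytes per pixel.
    frame = 2 * 1024 * 512 * 3
    print(f"link: {link_bytes_per_s() / 1e9:.1f} GB/s per direction")
    print(f"~{max_frames_per_s(frame, protocol_efficiency=0.8):.0f} frames/s at 80% efficiency")
```

Under these assumptions, the x4 link carries about 2.0 GB/s per direction, or roughly 500 such frames per second after overhead. Input transfer is therefore unlikely to be the limiting factor unless frames are far larger.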

In short, the Xavier NX is a powerful AI module, but it is too expensive and too bulky for a compact banknote counting machine. The M1076 delivers more AI performance (26 vs. 21 TOPS) at a fraction of the price and size — making embedded AI practical for the first time in this industry.

Why This Matters for Banknote Processing

Before the M1076, there was no practical way to run a deep neural network inside a banknote counting machine. High-end edge AI modules like NVIDIA's Jetson cost more than the machine itself and are far too large to fit inside the enclosure. The M1076 changes this equation entirely.

Removing the Mechanical Tape Detector

The M1076's affordable price and tiny footprint enabled a breakthrough in machine design: we replaced the bulky, expensive mechanical tape detector with the new Advanced CIS (contact image sensor) + NPU + Zynq 1EG platform. The mechanical tape detector, a spring-based sensor assembly, occupied significant space inside the machine and added considerable cost. Removing it freed space and budget, which we redirected to the AI platform.

The result is a machine that is better at tape detection, targeting a detection rate near 100% versus 64.58% for the mechanical sensor. It also gains full fitness sorting, advanced counterfeit detection, and OCR, all from the same AI platform in a single forward pass.
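One way a single forward pass can serve several tasks is a shared backbone with small task-specific heads. The expensive feature extraction runs once, and each head is a cheap classifier over the same feature vector. The Python sketch below shows only the head-decoding step. The head names, labels and weights are hypothetical illustrations rather than the machine's actual network, and OCR (a sequence output) is omitted for brevity.

```python
from typing import Dict, List

# Hypothetical task heads and labels, for illustration only.
HEADS: Dict[str, List[str]] = {
    "fitness": ["fit", "unfit"],
    "denomination": ["10", "20", "50", "100"],
    "authenticity": ["genuine", "suspect"],
    "tape": ["no_tape", "tape"],
}


def linear(weights: List[List[float]], features: List[float]) -> List[float]:
    """One score per label: dot product of each weight row with the features."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def decode_all(features: List[float],
               head_weights: Dict[str, List[List[float]]]) -> Dict[str, str]:
    """Every head reads the same shared feature vector, so one backbone pass
    serves all tasks; only the small heads differ."""
    results = {}
    for task, labels in HEADS.items():
        scores = linear(head_weights[task], features)
        if len(scores) != len(labels):
            raise ValueError(f"head {task!r} needs one weight row per label")
        results[task] = labels[max(range(len(scores)), key=scores.__getitem__)]
    return results


if __name__ == "__main__":
    shared_features = [1.0, 0.0]  # hypothetical backbone output for one note
    weights = {
        "fitness": [[1.0, 0.0], [0.0, 0.0]],
        "denomination": [[0.0, 0.0], [0.0, 0.0], [1.0, 0.0], [0.0, 0.0]],
        "authenticity": [[1.0, 0.0], [0.0, 1.0]],
        "tape": [[0.0, 0.0], [1.0, 0.0]],
    }
    print(decode_all(shared_features, weights))
```

Adding a task in this scheme means adding one more small head, not another network, which keeps the per-note cost close to a single backbone pass.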

Full Fitness in a Value Counter Form Factor

This is perhaps the most significant implication: the compact size and reasonable cost of the M1076 make it possible to embed full fitness sorting — previously available only in large, expensive sorters — into a value counter-sized machine. A machine that was once a simple counter can now classify every banknote by fitness, denomination, authenticity, and tape — without growing in size or moving to a higher price category.

Want to understand why image-based banknote analysis is so challenging — and how deep learning overcomes the limitations of the old approach? Read more in Deep Learning →

Reference Videos

For a deeper understanding of Mythic's analog compute-in-memory technology:

Mythic: Analog AI Processor

Overview of analog compute-in-memory architecture

Mythic AI Chip Explained

How analog computation enables edge AI

Inside Mythic's NPU

Technical deep-dive into the M1076

Mythic vs Digital NPU

Comparison with digital alternatives